GitHub Actions Day 19: Downloading Artifacts

This is day 19 of my GitHub Actions Advent Calendar. If you want to see the whole list of tips as they're published, see the index.

Yesterday we looked at how to upload artifacts as part of your workflow run and then download them manually. That's useful in many scenarios, but I think the more powerful use of artifacts is transferring files between the jobs in a workflow. Each job runs on a fresh runner, so an artifact is how the output of one job reaches another.
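Here's a minimal sketch of two jobs handing a file over through an artifact. It assumes the current major versions of `actions/upload-artifact` and `actions/download-artifact` (v4). The workflow, job and artifact names are made up for illustration.

```yaml
# Hypothetical workflow: one job produces a file, a second job consumes it.
name: artifact-handoff

on: push

jobs:
  produce:
    runs-on: ubuntu-latest
    steps:
      - name: Create a file to share
        run: |
          mkdir -p output
          echo "built at $(date)" > output/build-info.txt
      - name: Upload it as an artifact named "build-output"
        uses: actions/upload-artifact@v4
        with:
          name: build-output
          path: output/

  consume:
    # needs: makes this job wait until "produce" has finished successfully
    needs: produce
    runs-on: ubuntu-latest
    steps:
      - name: Download the artifact into ./output
        uses: actions/download-artifact@v4
        with:
          name: build-output
          path: output
      - name: Use the file
        run: cat output/build-info.txt
```

The `needs` key does the ordering here. Without it, both jobs would start at the same time, and the download would most likely fail because nothing had been uploaded yet.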

For example, you might have a project that builds a binary on multiple platforms and uploads each binary as an artifact. It then fans in to a final job that aggregates those binaries into a single package.
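A fan-in like that might look roughly like the sketch below. The `build.sh` script, the `dist/` output directory and the artifact names are hypothetical. It assumes v4 of the artifact actions, where artifact names must be unique within a run and `download-artifact` accepts a `pattern` input to fetch several artifacts at once.

```yaml
# Hypothetical fan-in: build on several platforms, then package everything.
name: fan-in

on: push

jobs:
  build:
    strategy:
      matrix:
        os: [ubuntu-latest, macos-latest, windows-latest]
    runs-on: ${{ matrix.os }}
    steps:
      - uses: actions/checkout@v4
      # Hypothetical build script; assumed to write its output into dist/
      - name: Build
        shell: bash
        run: bash build.sh
      - name: Upload this platform's binary
        uses: actions/upload-artifact@v4
        with:
          # Include the OS so each matrix job uploads a distinct artifact
          name: binary-${{ matrix.os }}
          path: dist/

  package:
    needs: build
    runs-on: ubuntu-latest
    steps:
      - name: Download every per-platform artifact
        uses: actions/download-artifact@v4
        with:
          pattern: binary-*
          path: all-binaries
      # Each artifact lands in its own subdirectory,
      # e.g. all-binaries/binary-ubuntu-latest/
      - name: Aggregate into a single package
        run: tar -czf package.tar.gz -C all-binaries .
      - name: Upload the combined package
        uses: actions/upload-artifact@v4
        with:
          name: package
          path: package.tar.gz
```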

Or you might want to fan out instead: have one job create a single artifact, then run multiple jobs on different platforms to test that artifact.

Here I have a workflow that tests my native code: first, I build

my native code test runner, which uses the

clar unit test framework so

that it compiles a single binary named testapp with all my unit tests.

That binary gets uploaded as an artifact called tests. Then I'll

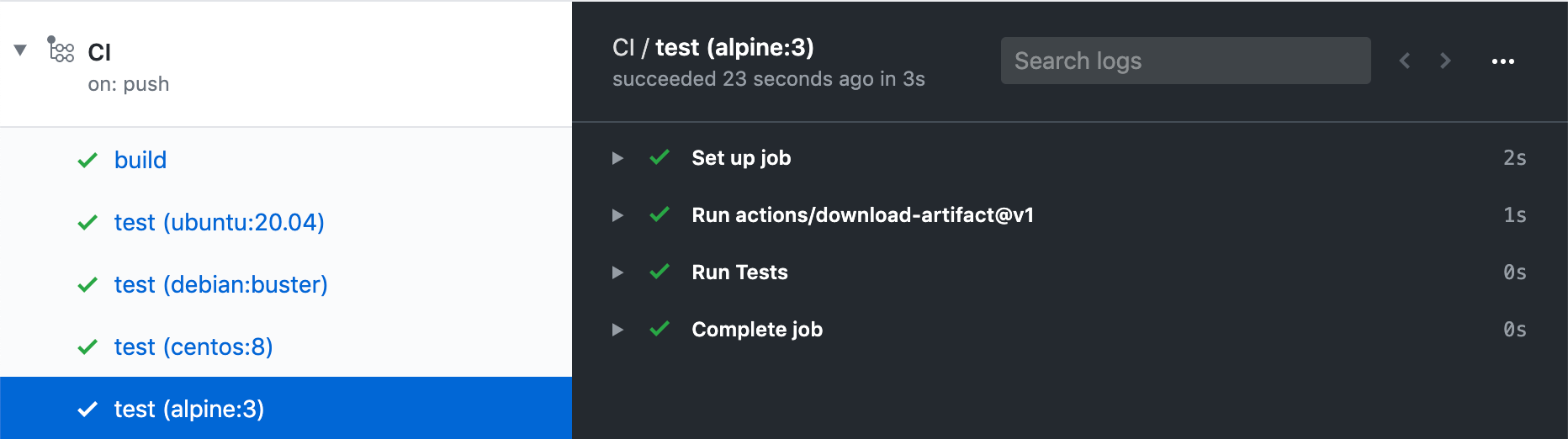

create a matrix job that depends on the first build job. It will

set up a matrix to execute within the container, using the latest

versions of Ubuntu, Debian, CentOS and Alpine. Each job will download

the tests artifact produced in the buid job, then set set the testapp

as executable (because artifacts don't preserve Unix permissions) and

finally, run the test application.
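Here's a sketch of a workflow along the lines described above, written against v4 of the artifact actions. The `make testapp` command is a hypothetical stand-in for however you build the clar runner. The sketch also assumes the resulting binary is statically linked, so that the same file runs on both the glibc-based images and musl-based Alpine.

```yaml
name: native-tests

on: push

jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      # Hypothetical build command; assumed to produce a statically
      # linked ./testapp containing all the clar unit tests
      - name: Build the test runner
        run: make testapp
      - name: Upload the test runner as the "tests" artifact
        uses: actions/upload-artifact@v4
        with:
          name: tests
          path: testapp

  test:
    # Don't start any matrix job until the build (and its upload) is done
    needs: build
    runs-on: ubuntu-latest
    strategy:
      matrix:
        # Images as named in the text; swap in others if any are unavailable
        container: ['ubuntu:latest', 'debian:latest', 'centos:latest', 'alpine:latest']
    container: ${{ matrix.container }}
    steps:
      # With only "name" set, the file is extracted into the workspace root
      - name: Download the "tests" artifact
        uses: actions/download-artifact@v4
        with:
          name: tests
      # Artifacts don't keep Unix permissions, so restore the executable bit
      - name: Make testapp executable
        run: chmod +x testapp
      - name: Run the tests
        run: ./testapp
```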

When I run this, the build job produces the artifact. Once that job completes, my test jobs all start, download the artifact, and run it.
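The `chmod` step works, but it has to happen wherever the artifact is consumed. An alternative, which the `actions/upload-artifact` documentation suggests for preserving permissions, is to tar the files before uploading. The archive records the executable bit, and unpacking it restores that bit. Here's a minimal sketch, again with a hypothetical `make testapp` build step and a single Alpine container for brevity.

```yaml
name: native-tests-tar

on: push

jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      # Hypothetical build command
      - name: Build the test runner
        run: make testapp
      # tar stores file modes, so the executable bit survives the upload
      - name: Pack testapp
        run: tar -cvf tests.tar testapp
      - uses: actions/upload-artifact@v4
        with:
          name: tests
          path: tests.tar

  test:
    needs: build
    runs-on: ubuntu-latest
    container: alpine:latest
    steps:
      - uses: actions/download-artifact@v4
        with:
          name: tests
      # Unpacking restores testapp with its original permissions; no chmod needed
      - name: Unpack and run
        run: |
          tar -xvf tests.tar
          ./testapp
```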

So artifacts aren't only for build output that you download by hand. They're also how you pass that output to subsequent jobs in the same workflow.