Advent Day 23: Removing Large Binaries with LFS

This is day 23 of my Git Tips and Tricks Advent Calendar. If you want to see the whole list of tips as they're published, see the index.

Earlier this month I talked about Git Large File Storage (LFS) and how you can use it to avoid checking in large files. It will keep those files in a separate area, parallel to (but not actually part of) your git repository, where they have a different storage contract that's efficient for large files.

But Git LFS needs to be set up before you add the binaries to your repository - once they've been checked in, they're in history.

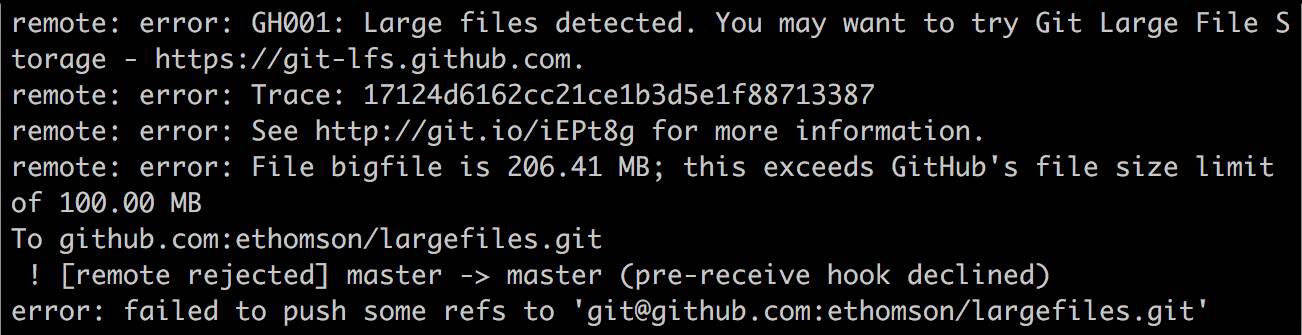

This is especially important since some Git hosting providers put limits on the size of the repository that you can push. Once you have 100 MB of binaries in your repository, even if you start using Git LFS after the fact, your repository is still too large to push, because those existing large files are still there.
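Before rewriting anything, it can help to see which blobs are actually taking up the space. Here's a minimal sketch, assuming a POSIX shell and stock Git plumbing (`git rev-list` and `git cat-file`). The `largest_blobs` function name is just an illustrative choice, not part of Git or Git LFS, and the sizes it reports are uncompressed object sizes rather than what the repository occupies on disk.

```shell
# Hypothetical helper: list the N largest blobs reachable from any ref.
# Output is one "size-in-bytes path" line per blob, largest first.
largest_blobs() {
    git rev-list --objects --all |
        # %(rest) echoes back whatever followed the object id (the path)
        git cat-file --batch-check='%(objecttype) %(objectsize) %(rest)' |
        # keep blobs only, dropping the leading "blob " column
        sed -n 's/^blob //p' |
        sort -rn |
        head -n "${1:-10}"
}
```

Running `largest_blobs 20` inside the repository prints up to twenty entries, which is usually enough to decide which patterns are worth moving to LFS.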

So what do you do if you've already added binaries to your repository? How do you get them out?

You're going to have to rewrite history to remove them, and add them with Git LFS retroactively.

Rewriting history, eh? If warning bells are going off in your head: good. They should. This is a bit of a tricky proposition. (But a regrettably unavoidable one in this case.) It's not exactly dangerous, but you will want to be careful, and to coordinate this with your team.

There are a few tools that you could use to remove large blobs from your repository: you could use filter-branch, which will walk through history and run a command to rewrite each commit. This is powerful but difficult and complex, especially if you have to deal with multiple branches. You could use BFG repo cleaner, which is a huge usability improvement over filter-branch but requires a separate install. Recently, Git LFS added migration support built in to the tool.

Here's how to migrate a repository:

1. Tell your team to stop working for a while. Yes, really; you're not going to want to do this near the end of a sprint. Ideally, you'll do this on a weekend, when nobody's doing any work, and you can shut down the repository temporarily. (How long? You might want to do a dry run of step 5 to find out - you can do that while people are working to get an idea of how long it will take.)

Your users should push any work-in-progress branches to the server, even if they're not ready to be merged into master; having them on the server ensures that they'll be included in your rewrite.

I'd encourage you to set up protected branches or branch policies to keep the branch closed temporarily, just to make sure that you don't have any conflicts.

2. Clone a new copy of the repository somewhere locally. You can do a mirror clone, which will clone the remote into a bare repository locally so that you don't check out all your large files unnecessarily. It will also get all the remote branches and tags, and set up remote tracking branches so that you can safely perform a force push.

`git clone --mirror git@github.com:ethomson/repo.git`

3. Optional, but encouraged: back up your repository. Maybe physical media to an off-site location, or pushing a zip up to OneDrive or Dropbox. If you're confident about your backup policies, maybe you could skip this, but when you're about to undertake a repository rewrite, you might also want to think about a backup.

You could also push a backup branch to quickly recover from if things go horribly wrong. They shouldn't, of course, but this is your source code, right?

`git push origin master:master_before_lfs` should do the trick.

Repeat for any important integration branches.

4. Delete any local pull request test merge branches. Hosting providers like GitHub and Azure Repos store their pull request information as hidden branches. You'll normally never see these, since they're in a special namespace, but a mirror clone will pull them down.

You wouldn't want to rewrite these and try to push them (in fact, you wouldn't be able to), so you should delete them to avoid unnecessary work during rewriting and to simplify your push.

A quick bash command will take care of that, iterating over each of the pull request references in the `refs/pull` namespace and then deleting them:

`git for-each-ref --format='%(refname)' refs/pull/ | xargs -n 1 git update-ref -d`

(In case you were wondering from yesterday's tip, this is exactly the sort of thing that I'd rather do in a proper language instead of piping things into `xargs`. Ugh.)

5. Rewrite your git history to add the files into the LFS area for all your existing commits. The `git lfs migrate` tool can take care of this for you, and you can either specify the names of the files that you want to import, or let it detect the large files automatically.

To remove the `*.dat` files from history, converting them to LFS files in every branch:

`git lfs migrate import --everything --include='*.dat'`

Or to let the tool find and migrate all your large files, you can omit the `--include` option:

`git lfs migrate import --everything`

This will configure the `.gitattributes` correctly at every commit, and place all the specified (or detected) files into LFS. The `lfs migrate` tool will have rewritten your branches with a series of new commits that should be identical to your previous branches, except that the large files will now be added to your large file storage.

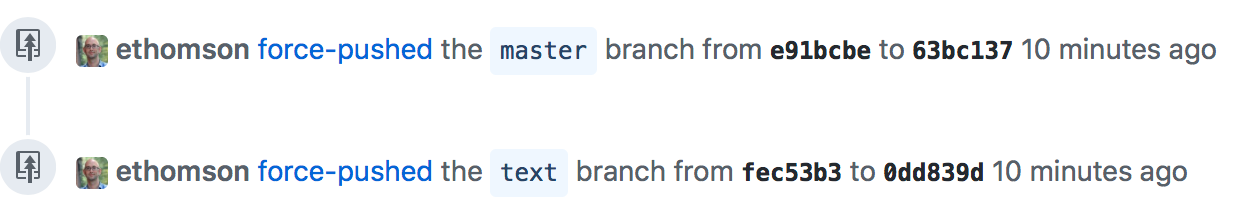

6. Now you can force push the branches that you rewrote. I strongly encourage the safe force push using:

`git push --mirror --force-with-lease`

This will push all the rewritten upstream branches, including any branches that were opened for pull requests, and it will update all the branches at once. This is important, because if you only pushed the master branch, or your other integration branches, any pull requests opened against them will suddenly make no sense.

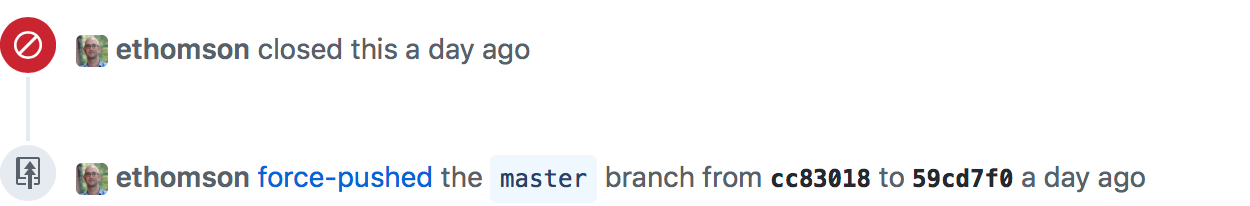

Fundamentally, you've rewritten all of history, so your open pull requests would no longer share a merge base with the master branch. They simply can't be merged, so GitHub will just close your pull requests:

This doesn't happen, though, if you also push the rewritten pull request branches. In that case, GitHub notices that both branches were force-pushed and recalculates the merge properly. So any open pull requests stay open.

-

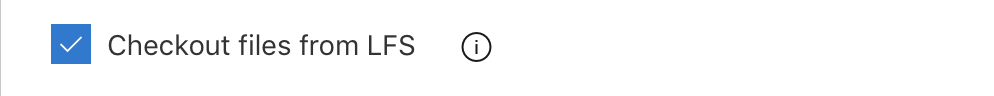

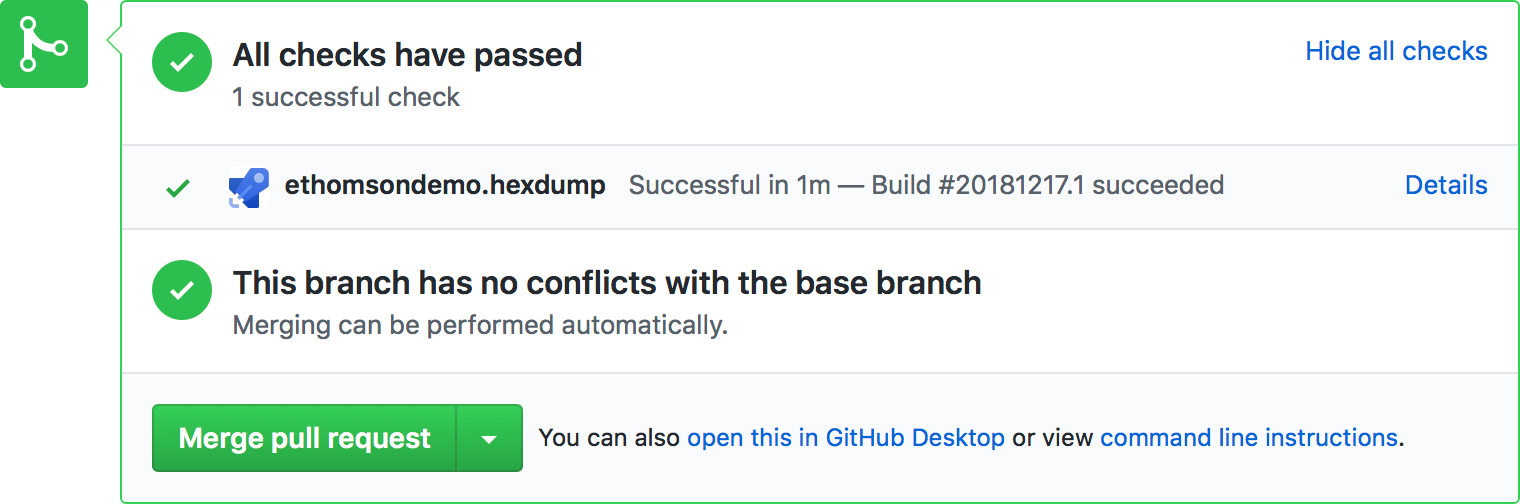

Run continuous integration builds, and validate that they're working properly. Your CI system should include Git LFS, though it may require you to explicitly enable it as an option. There's a simple checkbox in the "get sources" configuration of Azure Pipelines:

Once you've enabled that, queue a build on your master branch (and any other important integration branches) and make sure that things are working correctly.

-

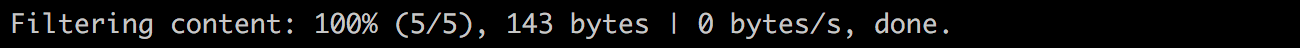

Clone the repository yourself and make sure that things are working correctly; you should see the message

Filtering contentas the last line of the clone output. This indicates that LFS is operating (it runs as a git "filter").

You should also work with your repository to make sure that it builds locally. Although you should have a high degree of confidence from the continuous integration builds, you'll want to ensure that things are working locally as well. Check out other integration branches, if you have them, or pull request branches, to ensure that things worked well during the migration across all branches.

9. Get your users to reclone their repositories. I am generally loath to encourage people to ever do this - a lot of people follow the delete and re-clone strategy when something goes wrong in their work and it's quite an anti-pattern. There are really no problems in Git that are so hairy that you need to get rid of the repository and start over; solving these problems without doing that will really level-up your Git knowledge.

This is the exception - we've rewritten every branch and there's no simple way to update. Instead, cloning a new copy of the repository is the right way to go. (Bonus: it's now much quicker to clone the repository since you don't have all those messy binaries.)

Note that if you pushed any backup branches, those binaries still exist on the remote, so you'll need to delete those backup branches before that space is reclaimed on your hosting provider.
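As a spot check alongside the verification steps above, you can look at what the rewritten commits actually contain without needing a working tree, which also means it works in the bare mirror clone. This is a minimal sketch, assuming a POSIX shell; the function names `lfs_rules_at` and `is_lfs_pointer` are hypothetical helpers, not Git or Git LFS commands. It relies on two things: LFS tracking rules in `.gitattributes` carry a `filter=lfs` attribute, and an LFS pointer file begins with the line `version https://git-lfs.github.com/spec/v1`.

```shell
# Hypothetical helper: print the LFS tracking rules recorded in
# .gitattributes at a given commit (defaults to HEAD).
lfs_rules_at() {
    git show "${1:-HEAD}:.gitattributes" 2>/dev/null | grep 'filter=lfs'
}

# Hypothetical helper: succeed if the blob stored at <rev>:<path> is an
# LFS pointer rather than the real file contents.
is_lfs_pointer() {
    git cat-file -p "$1:$2" 2>/dev/null |
        head -n 1 |
        grep -q '^version https://git-lfs.github.com/spec/v1$'
}
```

For example, `lfs_rules_at master` should list a rule for each migrated pattern, and `is_lfs_pointer master path/to/file.dat` (with a path from your own repository) should succeed for a migrated file and fail for an ordinary source file.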

This is actually surprisingly straightforward. There's one gotcha though, and that's if you use a fork-based workflow.

If you're working on an open source or inner source project where users fork the main repository, make changes in a branch in their fork, and then open a pull request from the fork back to the main repository, you'll have some difficulty with this workflow. That's because when you did the rewrite, you weren't able to update the forked repositories.

In that case, when you do the force push, those pull requests will be closed, since there's no common history between the target branch and the pull request.

If you do have pull requests from forks, you should create branches for them in the main repository to ensure that they get rewritten. Users can then update their fork after the rewrite.
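One way to create those branches is to copy each pull request's head into an ordinary branch before you start the rewrite. The following is a rough sketch, assuming a POSIX shell, a regular (non-mirror) clone, and a host that exposes pull requests as `refs/pull/<number>/head`, as GitHub does. The `publish_pr_branch` function and the `pr-<number>` branch naming are hypothetical choices for illustration.

```shell
# Hypothetical helper: copy pull request <number> from <remote> into a
# regular branch named pr-<number>, then push that branch to <remote>
# so that it is included when every branch is rewritten.
publish_pr_branch() {
    remote=$1
    number=$2
    git fetch "$remote" "refs/pull/$number/head:refs/heads/pr-$number" &&
        git push "$remote" "refs/heads/pr-$number"
}
```

Calling `publish_pr_branch origin 42` would leave a `pr-42` branch in the main repository; you would repeat that for each open pull request that comes from a fork.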

But ultimately, there are surprisingly few "gotcha"s in this process, and it will help you manage the large files in your repository.